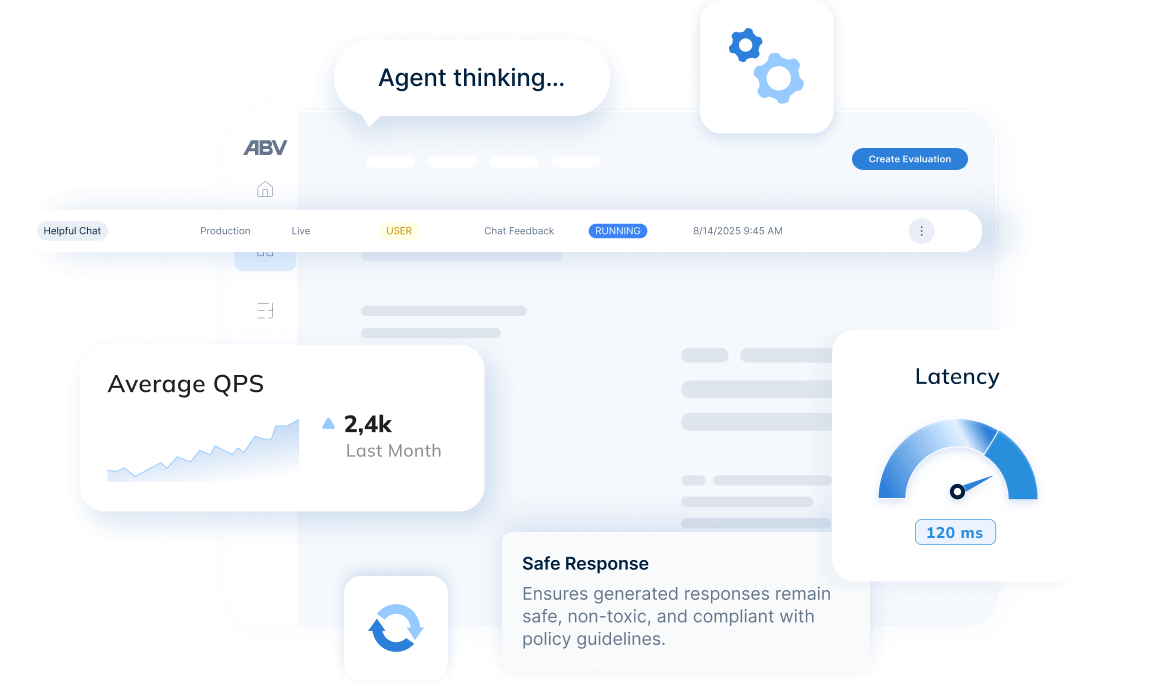

Full visibility and traceability in agentic AI

Trace complex reasoning, tool use, and autonomous decisions in production. ABV provides end-to-end visibility into agentic workflows that traditional solutions cannot handle.

- Predictable decision paths

- Rule-based workflows

- Fixed behavior

- Database queries

- Network calls

- Performance metrics

- Storage I/O

- Non-deterministic outputs

- Reasoning loops in LLMs

- Dynamic tool selection

- Multi-step reasoning traces

- Tool call sequences

- Token usage and costs

- Traditional infrastructure logs

Secure AI agents before deployment

Validate the safeguards and resilience of AI agents with automated adversarial testing

Run comprehensive attack simulations to identify and fix vulnerabilities in your AI agents before they go to production.

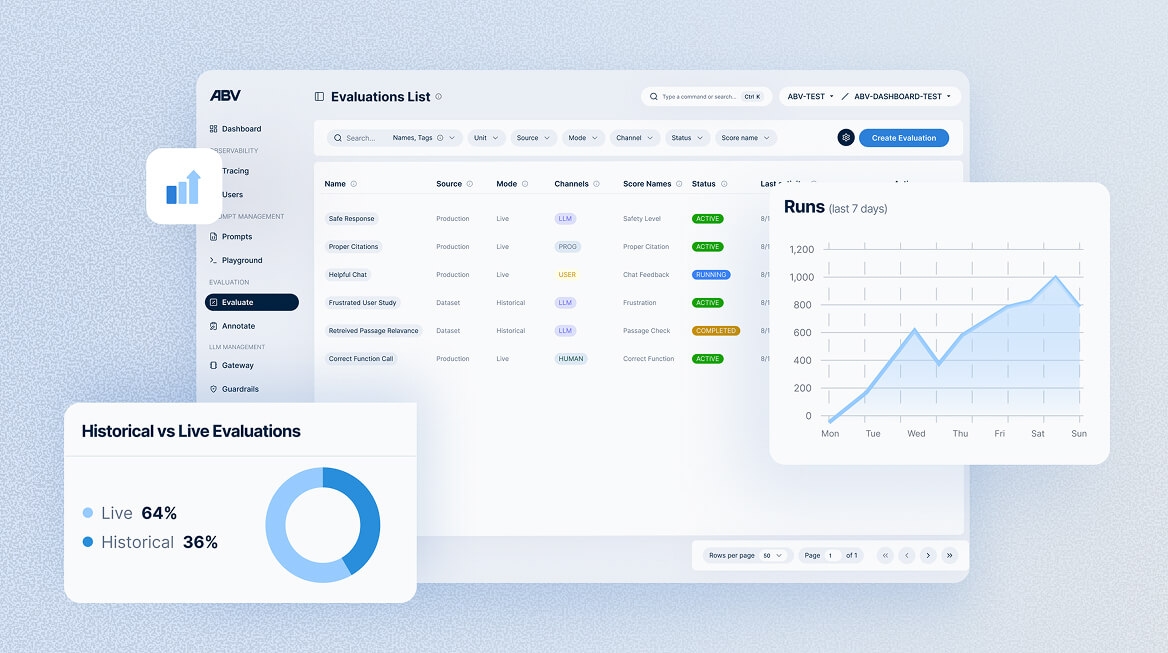

Get unified, detailed visibility to understand system performance and behavior at every layer

- Application

- Session

- Agent

- Trace

- Span
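One way to picture the layers listed above is as a nested data model in which metrics roll up from the innermost layer to the outermost. The sketch below is illustrative only: the class names mirror the layer names, but the nesting order (sessions contain agents, agents contain traces) and every field are assumptions, not ABV's actual schema.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Span:
    """One unit of work inside a trace, e.g. a single LLM or tool call."""
    name: str
    duration_ms: float


@dataclass
class Trace:
    """One end-to-end agent run, made up of spans."""
    trace_id: str
    spans: List[Span] = field(default_factory=list)

    def total_ms(self) -> float:
        return sum(span.duration_ms for span in self.spans)


@dataclass
class Agent:
    name: str
    traces: List[Trace] = field(default_factory=list)


@dataclass
class Session:
    session_id: str
    agents: List[Agent] = field(default_factory=list)


@dataclass
class Application:
    name: str
    sessions: List[Session] = field(default_factory=list)

    def total_ms(self) -> float:
        # Roll span durations up through every layer (assumed nesting).
        return sum(
            trace.total_ms()
            for session in self.sessions
            for agent in session.agents
            for trace in agent.traces
        )


def build_example() -> Application:
    # Hypothetical names and durations, for illustration only.
    trace = Trace("t-1", [Span("llm_call", 120.0), Span("tool:search", 80.0)])
    agent = Agent("researcher", [trace])
    session = Session("s-1", [agent])
    return Application("support-bot", [session])
```

With a structure like this, the same measurement can be inspected per span, per trace, or for the whole application, which is the kind of per-layer view the list describes.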

Compatible with all agent frameworks

Integrate ABV seamlessly with OpenTelemetry, LangGraph, Bedrock, Strands Agents, ADK, or your own agentic stack.

The OpenTelemetry (OTEL) integration lets teams keep their existing AI pipeline while staying compatible across systems.

Questions and answers

Observability for AI agents means that the entire decision process in agentic AI systems can be followed in detail. Unlike traditional monitoring, which mainly logs inputs and outputs, agentic observability captures how decisions are actually made. This covers multi-step reasoning, tool selection, intermediate decisions, and the context behind each action. It also includes tracing of LLM calls, function executions, information retrieval, and how agents coordinate their work.
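To make this concrete, here is a minimal sketch of a recorder that captures nested steps (agent run, LLM call, tool call) together with their context, rather than only the final input and output. The `Recorder` class, its fields, and the token count are hypothetical and do not represent ABV's API or the OpenTelemetry SDK.

```python
import time
from contextlib import contextmanager
from typing import Dict, Iterator, List


class Recorder:
    """Collects spans and remembers which span was active when each started."""

    def __init__(self) -> None:
        self.spans: List[Dict] = []
        self._stack: List[int] = []  # ids of currently open spans

    @contextmanager
    def span(self, kind: str, name: str, **attrs) -> Iterator[Dict]:
        record = {
            "id": len(self.spans),
            "parent": self._stack[-1] if self._stack else None,
            "kind": kind,
            "name": name,
            "attrs": dict(attrs),
        }
        self.spans.append(record)
        self._stack.append(record["id"])
        start = time.perf_counter()
        try:
            yield record
        finally:
            # Always close the span, even if the wrapped step raised.
            record["duration_s"] = time.perf_counter() - start
            self._stack.pop()


def demo() -> Recorder:
    rec = Recorder()
    with rec.span("agent", "answer_question", user_input="What is 2 + 2?"):
        with rec.span("llm", "plan") as s:
            s["attrs"]["tokens"] = 42  # made-up number for illustration
        with rec.span("tool", "calculator", expression="2 + 2") as s:
            s["attrs"]["result"] = 4
    return rec
```

Because each record stores its parent, the intermediate decisions can later be reconstructed as a tree instead of a flat log.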

Traditional applications follow deterministic, pre-programmed flows. Given the same input, they generally produce the same output. Agentic systems, by contrast, use large language models to make context-dependent decisions. They can choose different tools, strategies, or actions based on the situation, goals, and surrounding signals. As a result, the same input can lead to different outcomes, which raises the bar for visibility and traceability when you need to understand why a particular decision was made.

Single-agent systems use one AI agent to solve tasks, either step by step or by delegating work to tools. Multi-agent systems coordinate several specialized agents that collaborate, share information, or divide responsibility to solve more complex problems. Such architectures require observability that can follow communication between agents, task handoffs, and distributed decision-making.
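As a rough illustration of following task handoffs, the sketch below logs each handoff between agents and reconstructs the route a task took. The agent names and the `HandoffLog` class are hypothetical, and the `path` method assumes a simple chain in which each handoff starts where the previous one ended.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Handoff:
    sender: str
    receiver: str
    task: str


class HandoffLog:
    """Records which agent passed which task to which other agent."""

    def __init__(self) -> None:
        self.events: List[Handoff] = []

    def record(self, sender: str, receiver: str, task: str) -> None:
        self.events.append(Handoff(sender, receiver, task))

    def path(self) -> List[str]:
        # Agents in the order they held the work (assumes a linear chain).
        if not self.events:
            return []
        route = [self.events[0].sender]
        for event in self.events:
            route.append(event.receiver)
        return route


log = HandoffLog()
log.record("planner", "researcher", "find sources")
log.record("researcher", "writer", "draft summary")
```

Calling `log.path()` here yields the planner, researcher, writer sequence; a real multi-agent system with branching or parallel agents would need a graph rather than a list.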

Examples of agentic behavior include:

- Dynamic tool selection: The agent chooses between, for example, a calculator, web search, or a database query depending on the task.

- Multi-step reasoning: The agent plans and carries out a sequence of actions, for example: "search documentation → test code → compile results".

- Self-correction: The agent identifies errors in its own answer and tries again with a different strategy.

- Adaptive information retrieval: The agent decides when more context is needed and fetches relevant information from knowledge sources.

- Goal decomposition: The agent breaks complex requests down into subtasks and orchestrates their execution.
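Two of the behaviors above, dynamic tool selection and self-correction, can be sketched with a toy agent that records every decision it makes. Everything here is an assumption for illustration: the tools are trivial stand-ins, and `choose_tool` uses a keyword rule where a real agent would ask an LLM.

```python
from typing import Callable, Dict, List, Tuple


def calculator(query: str) -> str:
    # Toy tool: adds integers separated by '+', e.g. "2 + 3".
    return str(sum(int(part) for part in query.split("+")))


def knowledge_lookup(query: str) -> str:
    # Toy tool: a tiny in-memory stand-in for a search or database call.
    facts = {"capital of sweden": "Stockholm"}
    return facts.get(query.strip().lower(), "unknown")


TOOLS: Dict[str, Callable[[str], str]] = {
    "calculator": calculator,
    "knowledge_lookup": knowledge_lookup,
}


def choose_tool(query: str) -> str:
    # Stand-in for the model's decision; a real agent would ask an LLM here.
    return "calculator" if "+" in query else "knowledge_lookup"


def run_agent(query: str) -> Tuple[str, List[dict]]:
    trace: List[dict] = []
    tool_name = choose_tool(query)
    try:
        result = TOOLS[tool_name](query)
        trace.append({"step": "tool_call", "tool": tool_name, "ok": True})
    except ValueError:
        # Self-correction: the first choice failed, so retry with another tool.
        trace.append({"step": "tool_call", "tool": tool_name, "ok": False})
        tool_name = "knowledge_lookup"
        result = TOOLS[tool_name](query)
        trace.append({"step": "retry", "tool": tool_name, "ok": True})
    return result, trace
```

A query such as `"C++ basics"` is misrouted to the calculator by the keyword rule, fails, and is retried with the lookup tool; the returned trace shows both the failed attempt and the retry, which is the information an observability layer needs to explain the final answer.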

Agentic RAG means that AI agents decide for themselves whether, when, and how to retrieve external information. Instead of always searching a predetermined knowledge base, the agent evaluates the need for additional context and selects suitable data sources, such as vector databases, APIs, or search services. The agent can also judge whether the retrieved information is sufficient or whether more is needed. This differs from traditional RAG, where documents are retrieved according to predefined rules for every request.
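The decision loop described above can be sketched as follows. This is a simplified illustration, not a production retrieval pipeline: the `SOURCES` dictionary stands in for real vector databases or APIs, and keyword matching stands in for the model's judgement about what context is needed and whether it is sufficient.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical sources; real ones might be a vector database, an API, or a search service.
SOURCES: Dict[str, Dict[str, str]] = {
    "vector_db": {"refund": "Refunds are processed within 14 days."},
    "api": {"order": "Order 1001 shipped on Monday."},
}


def topics_in(question: str) -> Set[str]:
    # Stand-in for the agent deciding which external context the question needs.
    known = {topic for docs in SOURCES.values() for topic in docs}
    return {topic for topic in known if topic in question.lower()}


def agentic_retrieve(question: str) -> Tuple[List[str], List[str]]:
    context: List[str] = []
    decisions: List[str] = []
    missing = topics_in(question)
    if not missing:
        # The agent decides no retrieval is needed at all.
        decisions.append("skip_retrieval")
        return context, decisions
    for source_name, docs in SOURCES.items():
        hits = sorted(missing & set(docs))
        if hits:
            # Only query a source that can cover something still missing.
            decisions.append(f"query:{source_name}")
            context.extend(docs[topic] for topic in hits)
            missing -= set(hits)
        if not missing:
            # Stop as soon as the gathered context is judged sufficient.
            decisions.append("context_sufficient")
            break
    return context, decisions
```

The returned `decisions` list is the part worth tracing: it records whether retrieval was skipped, which sources were queried, and when the agent considered the context sufficient.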